Talos

Hardware accelerator for convolutional neural networks

February 23rd, 2026

The Project

Talos is a custom FPGA-based hardware accelerator built from the ground up to execute Convolutional Neural Networks with extreme efficiency. It isn't just a reimplementation of existing software logic in hardware; it is a rethinking of how deep learning inference should work at the circuit level.

Most deep learning frameworks are built for flexibility. They handle dynamic graphs, varying batch sizes, and a multitude of layer types. Talos takes the opposite approach. It strips away the runtime, the scheduler, and the operating system overhead to expose the raw compute capability of the FPGA. By implementing the entire inference pipeline in SystemVerilog, we achieve deterministic, cycle-accurate control over every calculation.

The Reality of Hardware

Talos was built in two weeks, but don’t let that timeline fool you. Those were two weeks full of 18-hour days, fueled by caffeine and sheer stubbornness. Building hardware is a completely different game from software.

In software, if something breaks, you check the logs, you add a print statement, and you recompile in seconds.

Software:

- print()

- logs

- recompile in seconds

- stack traces

Hardware:

- waveforms

- timing closure

- resynthesize for minutes

- nanosecond timing bugs

Hardware isn’t really “debuggable” in the way software is. You are bound by physics and the hard constraints of silicon, and the margin for error is brutally small. When a signal misses its timing window by even half a nanosecond, the entire system can collapse. You can’t just scale up or throw more compute at the problem; you’re working within a fixed number of logic elements, a fixed amount of on-chip memory, and a fixed clock budget that everything must obey.

There were long stretches where we sat staring at waveforms for hours, trying to catch a single bit flipping at the wrong moment. It’s a level of granularity most software engineers rarely have to touch. You’re not just debugging code anymore; you’re negotiating with the machine itself, trying to convince the FPGA to accept your logic within its physical fabric. It’s mentally exhausting, occasionally frustrating, and completely unforgiving, but that’s also what makes it real.

Motivation

PyTorch isn’t slow because it was built badly. It’s slow because it was built for flexibility. Training needs dynamic graphs, autograd, and a huge surface area of features. For inference, you end up paying that overhead every forward pass. Talos takes the opposite approach: strip away anything that isn’t the math, and make the whole pipeline deterministic in hardware.

No runtime, no scheduler. Every operation has a known path and a known cycle cost, so the same input always produces the same timing and behavior.

Production inference is dominated by response time. Talos is built to minimize wait time by keeping data moving and compute scheduled at the cycle level.

Frameworks lean on big intermediate buffers. Talos uses a streaming pipeline to avoid storing entire feature maps between layers when it doesn’t need to.

Fixed-point math and purpose-built control remove general-purpose overhead. The result is less work per inference, less wasted movement, and tighter use of FPGA resources.

These principles drove the design of Talos. Every part of the stack is optimized for inference, from how the weights are stored to how operations are scheduled and executed. It is not about being general purpose; it is about being efficient. That is why Talos can run models faster, using less memory, and in some cases even less power.

In this documentation you will find details on the architecture, design decisions, performance benchmarks, and how to get started with Talos. We hope you enjoy reading it, and we will keep iterating on it and improving it over time.

Inference

What's Behind the Inference Pipeline

Talos' first inference pipeline was built around one radical simplification: *do only the math that matters, nothing else*. No runtime, no scheduler, no abstraction. Every operation is grounded in fixed-point arithmetic, every cycle is deterministic, every path through the hardware is known.

Model Specs and Training

The architecture is reasonably straightforward. The model has a single convolutional layer that takes a 28×28 grayscale input and applies 4 kernels of size 3×3 with no padding, producing 4 feature maps of size 26×26. A ReLU activation follows. A 2×2 MaxPool layer with stride 2 then reduces each map to 13×13. The 4 pooled maps are flattened into a vector of 676 values (4 × 13 × 13) and passed through a single fully connected layer that maps directly to 10 output classes, one per digit.
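You can sanity-check the dimensions above by computing them directly. The sketch below uses the standard output-size formulas for an unpadded, stride-1 convolution and a non-overlapping pool. The helper names `conv_out_size` and `pool_out_size` are hypothetical and not part of the Talos codebase.

```python
def conv_out_size(n: int, k: int, stride: int = 1, pad: int = 0) -> int:
    """Side length after a square convolution (standard output-size formula)."""
    return (n + 2 * pad - k) // stride + 1


def pool_out_size(n: int, window: int = 2, stride: int = 2) -> int:
    """Side length after an unpadded square max-pool."""
    return (n - window) // stride + 1


IMAGE, KERNEL, N_KERNELS, N_CLASSES = 28, 3, 4, 10

conv = conv_out_size(IMAGE, KERNEL)   # 28x28 -> 26x26 (no padding)
pooled = pool_out_size(conv)          # 26x26 -> 13x13 (2x2 window, stride 2)
flat = N_KERNELS * pooled * pooled    # 4 * 13 * 13 inputs to the FC layer
fc_weights = flat * N_CLASSES         # one weight per (input, class) pair

print(conv, pooled, flat, fc_weights)  # 26 13 676 6760
```

The 6,760 figure is simply 676 inputs × 10 classes. It matches the ten 676-entry weight ROMs described later on this page.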

Given below is a diagram that shows the architecture of the model:

The Q16.16 Backbone

Training a model in PyTorch is great, but how do you actually transfer the weights to hardware? Unlike a CPU, the FPGA fabric does not natively understand floating-point numbers. There is no built-in binary point at the circuit level: everything is bits, and the hardware has no idea how to interpret them unless you specify it.

The answer is fixed-point arithmetic and quantization. You fix the position of the binary point at design time and agree on it everywhere. We use the Q16.16 format, where every number is a 32-bit signed integer. The upper 16 bits represent the integer part (including the sign) and the lower 16 bits represent the fractional part.

\[\underbrace{\,\overbrace{\text{sign + integer (16 bits)}}^{\text{bits 31..16}}\;|\;\overbrace{\text{fractional (16 bits)}}^{\text{bits 15..0}}\,}_{32\ \text{bits total}}\]

Converting between floating-point and Q16.16 is straightforward. A float is mapped into Q16.16 by computing:

\[ \text{v} = \text{round}(\text{float} \times 2^{16}) \]

And the reverse is obtained by dividing by the same scaling factor:

\[ \text{float} = \frac{\text{v}}{2^{16}} \]

The smallest representable step in this format is:

\[ \epsilon = 2^{-16} \approx 1.5 \times 10^{-5} \]

This provides fine-grained precision for most computations. Arithmetic in Q16.16 follows simple integer rules. Addition and subtraction are ordinary 32-bit integer operations. Multiplication is performed exactly in 64 bits, since the product of two Q16.16 values is a Q32.32 value. The result is then rescaled back to Q16.16 with an arithmetic right shift by 16, which discards the low 16 fractional bits:

\[ z = \left\lfloor \frac{x \times y}{2^{16}} \right\rfloor = (x \times y) \gg 16 \]

Division is implemented by scaling the numerator by \(2^{16}\) (in a 64-bit intermediate) before integer division, so the quotient lands back in Q16.16:

\[ z = \frac{x \ll 16}{y} \]

Overall, the representable range is:

\[ -32768 \leq x < 32768 \]

The largest value is exactly \(32768 - 2^{-16}\). This range is wide enough for typical neural network inference, while keeping the math bit-exact, deterministic, and efficient to execute on hardware.

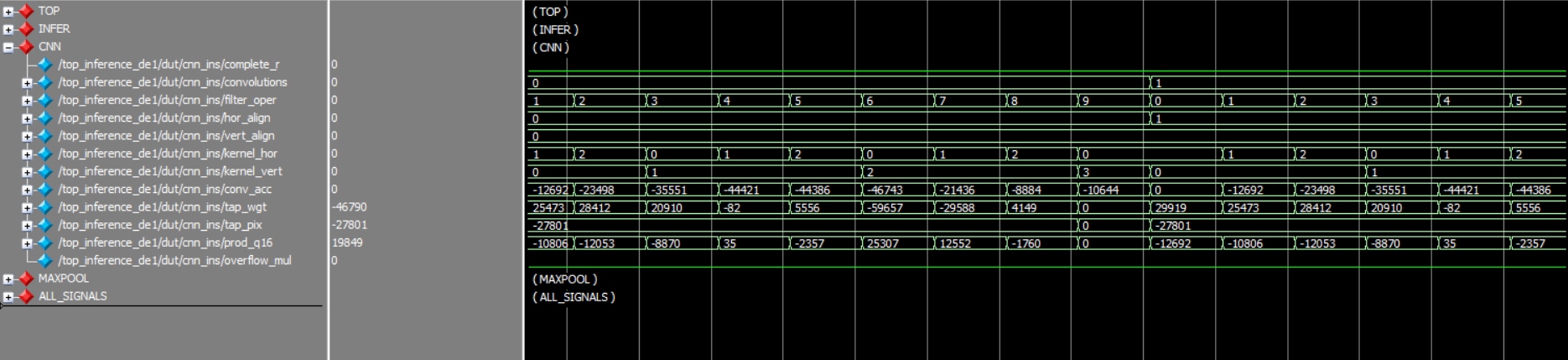
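The conversion and arithmetic rules above fit in a few lines of Python. This is handy as a golden model when checking simulation output.

This is a minimal sketch, not Talos code. The helper names are hypothetical. We also assume that out-of-range results wrap to 32 bits the way a fixed-width register would; the hardware may instead saturate, which the text does not specify.

```python
FRAC_BITS = 16
ONE = 1 << FRAC_BITS  # 1.0 in Q16.16


def wrap32(v: int) -> int:
    """Keep the low 32 bits as a signed value (assumes wrap-around, not saturation)."""
    v &= 0xFFFFFFFF
    return v - (1 << 32) if v & 0x80000000 else v


def to_q16(x: float) -> int:
    """v = round(x * 2^16). Python's round() breaks exact ties toward even."""
    return wrap32(round(x * ONE))


def from_q16(v: int) -> float:
    """float = v / 2^16."""
    return v / ONE


def q_add(a: int, b: int) -> int:
    """Addition is ordinary 32-bit integer addition."""
    return wrap32(a + b)


def q_mul(a: int, b: int) -> int:
    """Exact Q32.32 product, then arithmetic right shift by 16 (floors, like >>> on signed)."""
    return wrap32((a * b) >> FRAC_BITS)


def q_div(a: int, b: int) -> int:
    """Shift the numerator left by 16, then divide, truncating toward zero like Verilog's /."""
    num = a << FRAC_BITS
    q = abs(num) // abs(b)
    return wrap32(q if (num < 0) == (b < 0) else -q)


a, b = to_q16(1.5), to_q16(-2.25)
print(from_q16(q_mul(a, b)))  # -3.375
```

Note that the shift floors rather than rounds, so a tiny negative product such as `q_mul(-1, 1)` becomes `-1` (one LSB below zero), not `0`.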

The Convolution Operation: A Repeated Loop

Convolution is the fundamental operation in CNNs (it is literally the C in Convolutional Neural Networks). In hardware it is implemented as a multiply–accumulate (MAC) loop. Mathematically, the output at position (i,j) is computed as:

\[ y_{i,j} = \sum_{k=0}^{K-1} \sum_{l=0}^{K-1} x_{i+k,j+l} \cdot w_{k,l} \]

Here K = 3 is the kernel size. In Talos' cnn module, we walk through the kernel first row-wise and then column-wise, multiplying each weight with the corresponding pixel of the image. Both the input feature map and the kernel weights are in Q16.16 fixed-point format. Each multiplication is therefore promoted to a 64-bit Q32.32 value, then scaled back to Q16.16 with a right shift of 16 bits.

Once all 9 taps of the 3×3 kernel have been multiplied and accumulated, the sum is stored in the convimg bus that interfaces with the next layer. We then slide the kernel forward (again first row-wise and then column-wise) and repeat the procedure until the entire image has been convolved.

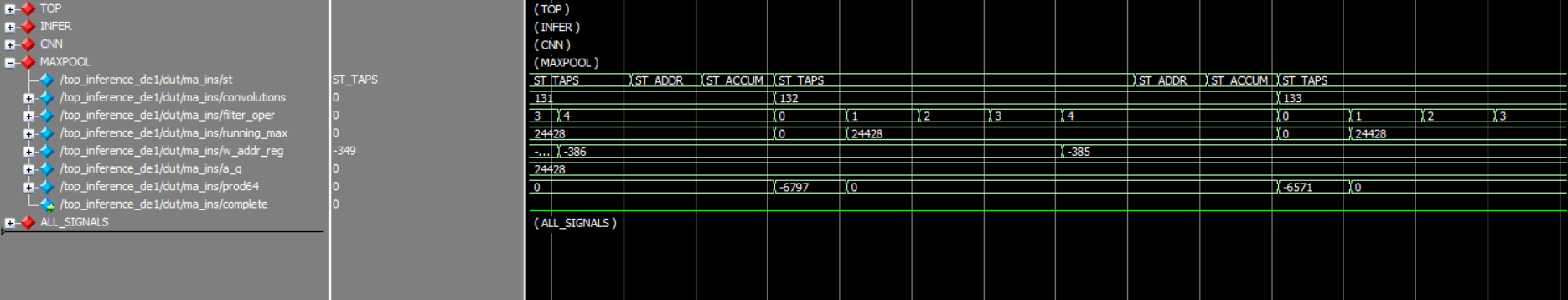
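As a software reference for the cnn module, the sketch below convolves one kernel over an image stored as Q16.16 integers. It shifts each full-width product back to Q16.16 before accumulating, as described above.

It is a hypothetical golden model for checking simulation output, not the SystemVerilog itself. The function name and the row-major window order are our assumptions. The accumulator is an unbounded Python int, so register overflow is not modelled.

```python
ONE = 1 << 16  # 1.0 in Q16.16


def conv2d_q16(img, ker):
    """Unpadded, stride-1 convolution on Q16.16 integers (software golden model).

    img: H x W nested lists of Q16.16 ints; ker: K x K nested lists of Q16.16 ints.
    Each product is formed at full width (Q32.32) and shifted right by 16 before
    it is added, mirroring the per-tap rescale. Python's >> on negative ints is an
    arithmetic (flooring) shift, like >>> on a signed SystemVerilog operand.
    """
    H, W, K = len(img), len(img[0]), len(ker)
    out = []
    for i in range(H - K + 1):          # window position, top to bottom
        row = []
        for j in range(W - K + 1):      # window position, left to right
            acc = 0
            for k in range(K):          # walk the K x K taps
                for l in range(K):
                    acc += (img[i + k][j + l] * ker[k][l]) >> 16
            row.append(acc)             # one output value per window position
        out.append(row)
    return out


img = [[ONE] * 28 for _ in range(28)]   # all-ones 28x28 image
ker = [[ONE] * 3 for _ in range(3)]     # all-ones 3x3 kernel
out = conv2d_q16(img, ker)
print(len(out), len(out[0]), out[0][0] / ONE)  # 26 26 9.0
```

Because integer addition is associative, the order in which taps and windows are visited does not change the result. Only the per-tap shift placement does, which is why the model shifts inside the inner loop rather than once at the end.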

Maxpool and Fully Connected Layer: Just Compare and Multiply-Accumulate

Let's understand the Maxpool operation first. Maxpool is a simple operation that reduces resolution by taking local maxima. Mathematically, the output at position (i,j) is:

\[ y_{i,j} = \max \{ x_{2i,2j},\; x_{2i,2j+1},\; x_{2i+1,2j},\; x_{2i+1,2j+1} \} \]

The fully connected layer, on the other hand, is a plain weighted sum (a linear layer). For each output neuron n:

\[ y_n = b_n + \sum_{i=0}^{675} x[i] \cdot w_n[i] \]

What makes the Talos maxpool module interesting is that it does both at once, while fusing in the ReLU for free. Instead of starting the window comparison from the first value, we initialize the running maximum at zero. As a result, any window where all four values are negative simply returns zero, which is exactly what ReLU does. No extra pass, no extra logic, just a smarter starting point.

From there the path is straightforward: compare against each of the four values in the 2×2 window, updating the maximum at each step. Three comparators and three muxes: that's all the operation needs.
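The zero-initialized running maximum is easy to express in software. The sketch below uses a hypothetical function name and operates on one Q16.16 feature map (26×26 in Talos). It walks the 2×2 windows with stride 2 and starts each comparison at zero, so all-negative windows collapse to zero exactly as ReLU would.

```python
def maxpool_relu_q16(fmap):
    """2x2, stride-2 max-pool whose running maximum starts at 0 (fused ReLU)."""
    H, W = len(fmap), len(fmap[0])
    out = []
    for i in range(0, H - 1, 2):            # top-left row of each window
        row = []
        for j in range(0, W - 1, 2):        # top-left column of each window
            best = 0                        # starting at zero gives ReLU for free
            for v in (fmap[i][j], fmap[i][j + 1],
                      fmap[i + 1][j], fmap[i + 1][j + 1]):
                if v > best:                # compare, then select the larger value
                    best = v
            row.append(best)
        out.append(row)
    return out


print(maxpool_relu_q16([[-5, 3], [2, -7]]))    # [[3]]
print(maxpool_relu_q16([[-5, -3], [-1, -7]]))  # [[0]]
```

In hardware the loop over the four values unrolls into fixed compare-and-select stages. The software version just makes the zero starting point explicit.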

After each kernel-level operation, the pooled value immediately gets multiplied by 10 weights in parallel (one per output neuron) and accumulated into 10 separate 64-bit registers. Both the activation and weights are in Q16.16, so each multiplication is promoted to 64-bit Q32.32, then right-shifted by 16 to scale back before accumulating. This fusion means we don't need to store the pooled feature map all at once, avoiding wide intermediate buses that would consume significant on-device resources.

Each of the 10 neurons has its own dedicated M10K ROM holding all 676 of its weights, split across the 4 kernel passes. All 10 ROMs share the same address bus, so a single address lookup retrieves one weight per neuron at once, feeding 10 MACs in the same cycle. The addressing is handled by a ker_sel signal passed to the module. The ROM index for any given operation is simply:

\[ \text{index} = 169 \times \text{ker\_sel} + \text{convolution\_operation} \]

Here convolution_operation increments row-wise then column-wise across the 13×13 pooled output, exactly mirroring how the CNN convolved across the image. Each kernel pass maps to a clean 169-element slice of the ROM, and ker_sel just tells the module which slice to start from.
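The sketch below ties the last three paragraphs together by modelling the fused maxpool and fully connected path:

- Ten weight ROMs (plain Python lists here) share one address.
- The shared address is ker_sel × 169 plus the position index within the pass.
- Every pooled value is multiplied against all ten weights and added into ten accumulators that persist across the four passes.

`ker_sel` and `convolution_operation` come from the text. The function names are hypothetical. Biases are left out because the page does not say how Talos adds them.

```python
N_NEURONS = 10
SLICE = 13 * 13  # pooled values produced per kernel pass (169)


def rom_address(ker_sel: int, convolution_operation: int) -> int:
    """Shared ROM address: start of this kernel's 169-entry slice plus the position."""
    return ker_sel * SLICE + convolution_operation


def fused_fc(pooled_per_kernel, roms):
    """Stream pooled Q16.16 values straight into 10 neuron accumulators.

    pooled_per_kernel: 4 lists of 169 pooled values (13x13, in walk order).
    roms: 10 lists of 676 Q16.16 weights, one per neuron, read at a shared address.
    """
    acc = [0] * N_NEURONS                        # cleared once, kept across all 4 passes
    for ker_sel, pooled in enumerate(pooled_per_kernel):
        for op, x in enumerate(pooled):
            addr = rom_address(ker_sel, op)      # one lookup feeds all 10 MACs
            for n in range(N_NEURONS):
                acc[n] += (x * roms[n][addr]) >> 16   # Q32.32 product back to Q16.16
    return acc


pooled = [[1 << 16] * SLICE for _ in range(4)]        # every pooled value = 1.0
roms = [[n << 16] * 676 for n in range(N_NEURONS)]    # neuron n: every weight = n
print([a >> 16 for a in fused_fc(pooled, roms)])      # [0, 676, 1352, ..., 6084]
```

No pooled value is ever stored: each one is consumed by the ten MACs as soon as it is produced, which is the point of the fusion.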

Every cycle counts...

One last thing worth noting. The original PyTorch model was trained with the standard CNN → ReLU → MaxPool ordering. In Talos, we flipped it to CNN → MaxPool → ReLU, and that was a deliberate hardware decision. Applying ReLU inside the CNN module would cost one extra cycle per output pixel to check the sign and zero out negatives. Across a 26×26 output, that's 676 extra cycles per kernel pass, or 2,704 across all four. By instead initializing the maxpool comparison at zero, we get ReLU for free without touching the CNN pipeline at all.

The math is equivalent because ReLU is monotonic: it never reverses the order of two values, so clamping the window maximum at zero gives the same result as clamping every value first. The accuracy is identical, and we save thousands of cycles just by reordering two operations. This is the kind of optimization that doesn't exist in software. PyTorch doesn't care about cycle counts, but for hardware folks it's exactly the kind of thinking that compounds into real performance gains.

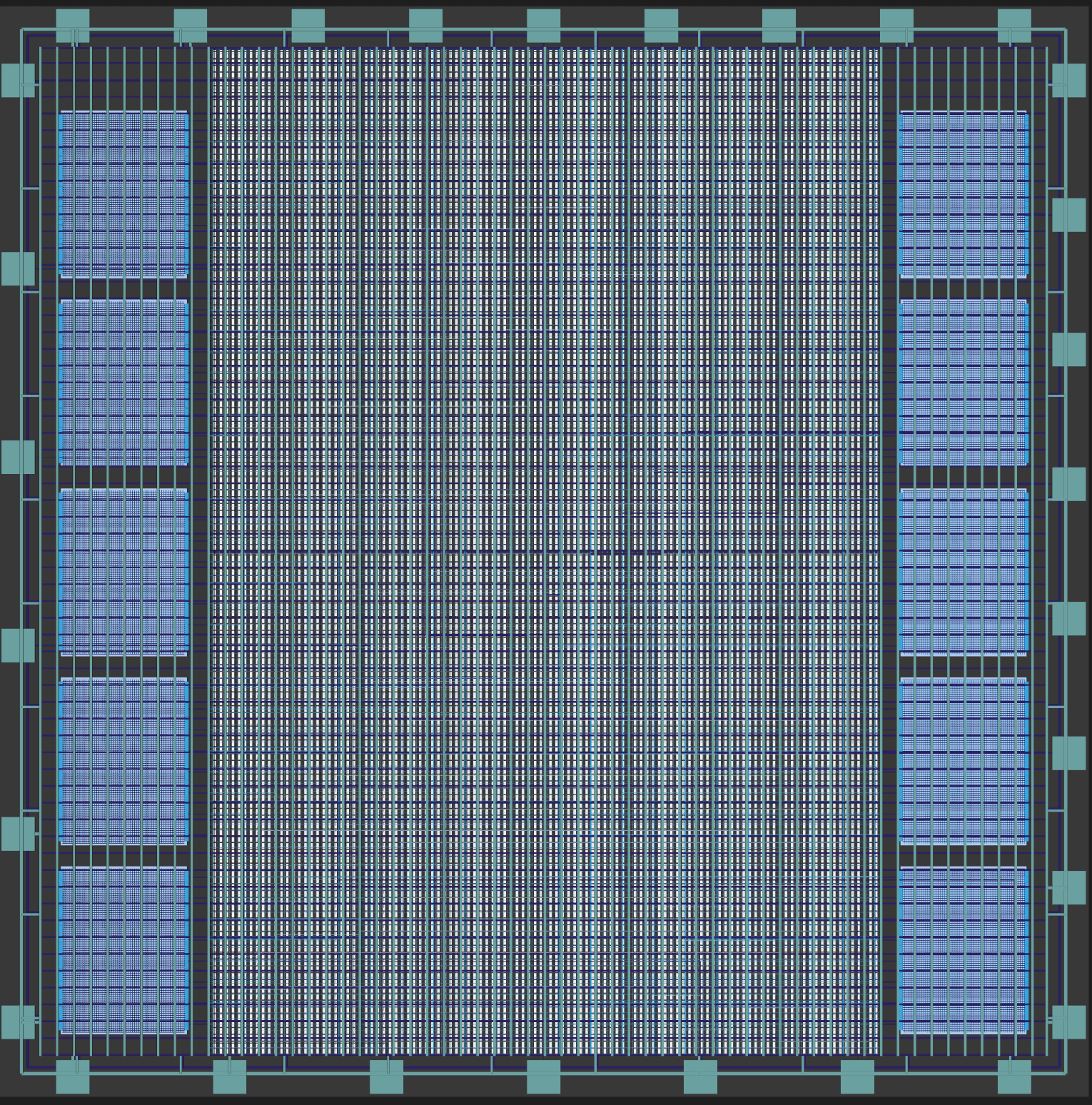
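The claim that the reordering is free can be checked in software. The sketch below compares two orderings on random 2×2 windows of 32-bit values:

- the PyTorch ordering: ReLU, then a plain max over the window;
- the Talos ordering: max with the running value initialized at zero.

It also recomputes the cycle budget quoted above. It is a property check written for illustration, not part of the Talos test suite.

```python
import random


def relu(v: int) -> int:
    return v if v > 0 else 0


def pytorch_order(window):
    """CNN -> ReLU -> MaxPool: clamp every value, then take the maximum."""
    return max(relu(v) for v in window)


def talos_order(window):
    """CNN -> MaxPool with the running max starting at 0: ReLU falls out of the init."""
    best = 0
    for v in window:
        best = v if v > best else best
    return best


rng = random.Random(0)
for _ in range(10_000):
    w = [rng.randint(-(1 << 31), (1 << 31) - 1) for _ in range(4)]  # random Q16.16 words
    assert pytorch_order(w) == talos_order(w)

# Cycle budget: one extra ReLU cycle per 26x26 conv output pixel, four kernel passes.
per_pass = 26 * 26
print(per_pass, 4 * per_pass)  # 676 2704
```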

FPGA Architecture Deep Dive

Talos went through a series of architectural evolutions. Working with low-level digital design and FPGAs isn't just about getting the math right; it is about getting the math right within the hard physical constraints of the DE1-SoC. The FPGA has a fixed number of logic array blocks (LABs), a fixed amount of memory, and a fixed routing fabric. You can't negotiate with it. Every architectural tweak was forced by those limits.

The First Attempt: Brute Force

Our first attempt was a not-so-genius brute force: four cnn and maxpool instances, one for each kernel, all running in parallel. Logically, this is the fastest possible approach. In practice, however, it blew up the DE1-SoC. It consumed nearly 4× the available LABs on the chip, far too much for the fitter to place and route. We also initially had 10 instances of a neuron module, each with a massive port connected directly to the maxpool outputs. The sheer width of that bus created severe routing congestion, and Quartus threw fitter errors before we even got to timing analysis. The design was simply too big for a chip the size of the Cyclone V.

The beauty of constraints is that they force you to think: why doesn't something work, and is the approach itself wrong? In software, you can often brute-force your way through and worry about optimizing later. In hardware, if it doesn't fit, it doesn't ship.

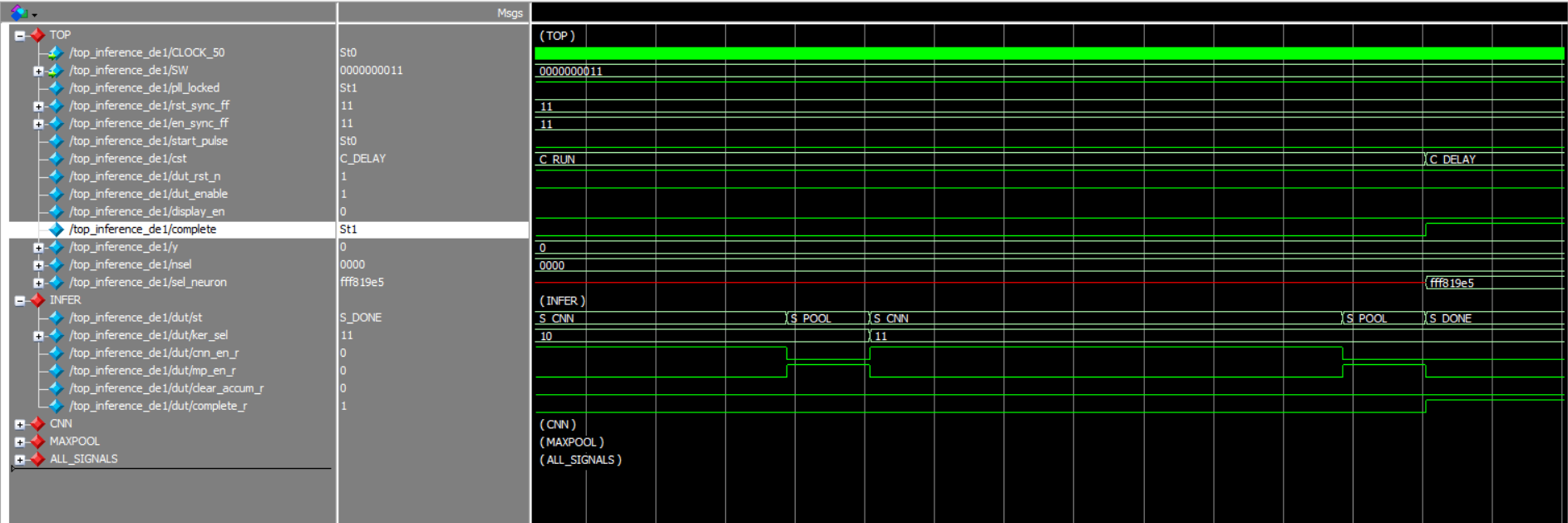

The Pivot: Time vs Memory

Hardware forces you to choose between speed and area: a design can be insanely fast, but that usually takes a whole lot of circuitry. And if it doesn't fit on the chip, it's useless no matter how fast it is. Keeping the overall footprint in mind while squeezing every cycle out at the module level, we settled on a time-multiplexed architecture. Instead of four parallel instances, we used a single cnn module and a single maxpool module and ran them back to back four times, once per kernel. This is the architecture Talos ships with.

This is handled by a finite state machine in the inference module that cycles through five states: S_CLEAR, S_CNN, S_POOL, S_GAP, and S_DONE.

It starts in S_CLEAR, driving clear_accum high to reset all 10 neuron accumulators. Each pass then proceeds as follows:

- **S_CNN** drives cnn_en high, which starts the cnn module and runs the convolution with the kernel selected by ker_sel.
- Once cnn_complete goes high, indicating that every position for that kernel has been processed, the FSM moves to **S_POOL**. It drives mp_en high and runs the maxpool module for that pass.
- After mp_complete goes high, the FSM enters **S_GAP**. It increments ker_sel to select the next kernel, resets the internal buses, and loops back to S_CNN.

The neuron accumulators are never cleared between passes, so they keep accumulating across all four runs. This is exactly how the fully connected layer's weighted sum over all 676 inputs is supposed to work. Once ker_sel reaches 3 (the last kernel) and that final pass completes, the FSM moves to S_DONE and drives complete high.
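The control flow above can be modelled as a small Python state machine that records the sequence of states it visits. This is a behavioural sketch only, with the following assumptions:

- The multi-cycle waits on cnn_complete and mp_complete are collapsed into single steps.
- The state names come from the text; everything else (the `State` enum and `run_fsm`) is hypothetical.
- The real inference module is clocked SystemVerilog.

```python
from enum import Enum, auto


class State(Enum):
    S_CLEAR = auto()
    S_CNN = auto()
    S_POOL = auto()
    S_GAP = auto()
    S_DONE = auto()


N_KERNELS = 4


def run_fsm():
    """Return the visited (state, ker_sel) pairs for one inference."""
    state, ker_sel, trace = State.S_CLEAR, 0, []
    while True:
        trace.append((state, ker_sel))
        if state is State.S_CLEAR:      # clear_accum high: reset the 10 accumulators
            state = State.S_CNN
        elif state is State.S_CNN:      # cnn_en high; leave once cnn_complete is seen
            state = State.S_POOL
        elif state is State.S_POOL:     # mp_en high; leave once mp_complete is seen
            state = State.S_GAP
        elif state is State.S_GAP:      # last kernel done? otherwise select the next one
            if ker_sel == N_KERNELS - 1:
                state = State.S_DONE
            else:
                ker_sel += 1
                state = State.S_CNN
        else:                           # S_DONE: complete goes high
            return trace


for s, k in run_fsm():
    print(s.name, k)
```

S_CLEAR appears exactly once at the start, which is what lets the accumulators carry partial sums from one kernel pass to the next.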

This change alone cut the LAB (Logic Array Block) footprint to almost half that of the initial design, a sign that we were on the right path.

Fusing Maxpool and the Fully Connected Layer

With the time-multiplexed architecture in place, one key question remained: how do the pooled results get from maxpool to the fully connected layer? The naive answer is to store them and feed the FC layer once all four passes are done. We did exactly this at first, but the design was still too big to fit on the Cyclone V.

In the end, it all came down to the math. Logically, the fully connected layer is just multiply-accumulate and there's no reason to hold onto the activations at all. So we sort of cheated our way (it worked!) and fused the two modules together. The moment maxpool computes a pooled value, it immediately multiplies it against all 10 neuron weights and accumulates directly into the neuron registers. No bus, no extra device resource usage.
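A back-of-the-envelope count, using only figures from this page, shows what the fusion avoids. Buffering every pooled activation for a later FC pass would mean holding all 676 Q16.16 values. The fused design adds no such buffer beyond the ten 64-bit accumulators the FC layer needs anyway:

\[ \underbrace{676 \times 32}_{\text{buffered pooled activations}} = 21{,}632\ \text{bits} \qquad \text{vs.} \qquad \underbrace{10 \times 64}_{\text{neuron accumulators}} = 640\ \text{bits} \]

This ignores control logic and routing, which the text identifies as the real source of pressure. Treat it as an illustration of scale rather than a measured utilization figure.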

Cutting Resource Usage Further: Weights in ROM

Even with a single CNN and maxpool instance, the fully connected layer weights were still a problem. While the design was able to synthesize within the LAB limit of the DE1-SoC, the fitter was unable to perform optimized routing due to design congestion. Storing all 676 weights per neuron as port arrays meant Quartus had to synthesize them into distributed logic, creating massive routing overhead and pushing device utilization a bit too high.

The fix was relatively simple: move every neuron's weights into M10K ROM blocks using Altera's altsyncram IP, initialized from .mif files at synthesis time. Each of the 10 neurons gets its own ROM, all sharing a single address bus. This single change dropped overall on-device resource utilization to roughly a third of what it was, turning a design that couldn't fit into one that routed cleanly with timing to spare.
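If you are reproducing the ROM flow, the sketch below writes one neuron's Q16.16 weights as a Quartus Memory Initialization File (.mif), the format an altsyncram ROM can be initialized from. The page does not show how Talos generates its .mif files. The following choices are our own assumptions:

- the function name;
- unsigned decimal addresses and hexadecimal data;
- 32-bit two's-complement words;
- one file per neuron, which would give DEPTH=676 in Talos.

```python
def weights_to_mif(weights, width: int = 32) -> str:
    """Render Q16.16 integers as a Quartus .mif file, one word per address."""
    mask = (1 << width) - 1
    digits = width // 4                      # hex digits per word
    lines = [
        f"WIDTH={width};",
        f"DEPTH={len(weights)};",
        "ADDRESS_RADIX=UNS;",
        "DATA_RADIX=HEX;",
        "CONTENT BEGIN",
    ]
    for addr, w in enumerate(weights):
        # Negative weights are stored as two's complement within `width` bits.
        lines.append(f"    {addr} : {w & mask:0{digits}X};")
    lines.append("END;")
    return "\n".join(lines) + "\n"


q16 = [round(x * (1 << 16)) for x in (0.5, -0.25, 1.0)]  # float -> Q16.16
print(weights_to_mif(q16))
```

Writing the string to `neuron_0.mif` (a hypothetical name) and pointing the ROM's initialization file at it is the remaining step. That is done in the IP configuration, not in this script.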

Priming the Pipeline

One of the subtle complexities of hardware design is latency management. When our control logic requests a weight from the on-chip ROM, the data doesn't appear instantly. There is a one-cycle delay.

If the arithmetic unit tries to use the data immediately, it will calculate garbage. To solve this, we implemented a "priming" mechanism. The state machine issues the read address, waits (primes) for one cycle to allow the ROM to access the data, and only then enables the multiply-accumulate unit. This ensures that the math is always performed on valid data.
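A cycle-level sketch makes the priming step concrete. The hypothetical `SyncRom` class below models a ROM with a one-cycle read latency: the word for an address appears on the output one clock after the address is issued. It uses plain integers instead of Q16.16 to keep the numbers readable.

The unprimed loop consumes the output in the same cycle it issues the address. The primed loop spends one cycle on address 0 and then stays one address ahead. Names and structure are ours, not taken from the Talos source.

```python
class SyncRom:
    """Hypothetical ROM model with a registered output: one cycle of read latency."""

    def __init__(self, contents):
        self.contents = list(contents)
        self.q = 0  # output register; holds stale data until a read completes

    def tick(self, addr):
        """Clock edge: the word at addr appears on q for the following cycle."""
        self.q = self.contents[addr]


def mac_unprimed(xs, rom):
    """Buggy: uses q in the same cycle the address is issued, so reads lag by one."""
    acc = 0
    for addr, x in enumerate(xs):
        acc += x * rom.q        # q still holds the previous word (or the reset value)
        rom.tick(addr)          # the new address only takes effect at the clock edge
    return acc


def mac_primed(xs, rom):
    """Issue address 0, wait one cycle (prime), then stay one address ahead."""
    acc = 0
    rom.tick(0)                 # priming cycle: word 0 is now valid on q
    for addr, x in enumerate(xs):
        acc += x * rom.q        # q == contents[addr]
        if addr + 1 < len(xs):
            rom.tick(addr + 1)  # prefetch the next word for the next cycle
    return acc


weights = [2, 3, 5, 7]
xs = [1, 10, 100, 1000]
print(mac_unprimed(xs, SyncRom(weights)), mac_primed(xs, SyncRom(weights)))  # 5320 7532
```

The unprimed result pairs every input with the previous weight, which is exactly the "garbage" described above. The primed version costs a single extra cycle per stream, not one per read.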

The Journey

We didn’t build Talos just to make another accelerator. We built it to prove that we could, to see if we could take the abstract mathematics of deep learning and forge it directly into silicon, stripping away the layers of software abstraction that have become the industry default.

It was one hell of a ride. There were days when nothing made sense, when the simulation looked perfect but the hardware refused to cooperate. We lost count of the hours spent staring at waveform traces, hunting for that one signal that was quietly breaking the entire pipeline: a misplaced clock edge, an uninitialized register, a single cycle drifting out of alignment.

Hardware is unforgiving. It doesn’t care about your intentions; it only cares about physics and timing. But that’s also why it’s rewarding. When you finally isolate the bug, fix the race condition, and watch the pipeline flow correctly for the first time, it’s a feeling you rarely get from high-level programming.

Talos stands as a testament to that struggle. It’s the result of obsession, of refusing to compromise on performance, and of the stubbornness required to build something from first principles. If anything, we hope looking inside this system gives others the confidence to take on their own impossible problems.

Cocotb was great for fast iteration, but several real bugs only appeared once we stepped into full waveform inspection. Cycle-level visibility ended up being non-negotiable.

Use the Quartus timing analyzer to check for timing violations. It's a lifesaver and worth the extra time to actually learn.

The designs that held up best were the simplest ones - clean dataflow, explicit control, and deterministic timing instead of clever abstractions.

Off-by-one delays, unprimed ROM reads, and invalid data windows caused more failures than actual logic errors.

Appendix

Contribute

Talos is open source because custom hardware shouldn’t be a black box. If you want to explore the SystemVerilog, improve the flow, or fix docs, jump in. Open an issue, drop a PR, or just explore the hardware repo.
